Introduction

Naturalism—the philosophical position that reality consists entirely of natural entities governed by natural laws—presents itself as the most rational and empirically grounded worldview. Yet despite its scientific veneer, naturalism suffers from foundational incoherence that undermines its viability as a comprehensive philosophy.

This critique demonstrates that naturalism is self-defeating, arbitrarily restrictive, explanatorily inadequate, and internally inconsistent. Each of these failings stems not from temporary limitations in scientific knowledge but from structural contradictions within naturalism itself. Together, they render naturalism philosophically untenable and point toward the necessity of a more pluralistic metaphysical framework.

Premise 1: Self-Defeating Foundations

Naturalism’s first fatal flaw lies in its inability to justify its own foundations without circularity or special pleading.

Scientific inquiry rests on several assumptions that cannot themselves be empirically verified: the reliability of human reason, the uniformity of nature, the correspondence between our perceptions and external reality, and principles like logical consistency and parsimony. These assumptions cannot be proven through scientific methods; they are preconditions for scientific inquiry itself.

This creates an insurmountable problem for naturalism. If reality consists entirely of natural entities governed by natural laws, then human cognition is merely the product of evolutionary processes that selected for survival value, not truth-tracking capacity. As philosopher Alvin Plantinga argues, if our cognitive faculties evolved primarily for reproductive fitness rather than truth detection, we have no reason to trust them for accurately grasping metaphysical truths like naturalism itself.

The naturalist might counter that evolutionary adaptiveness correlates with truth-tracking, particularly regarding immediate environmental threats. But this defense fails to bridge the gap between adaptive perceptual reliability and justified abstract metaphysical beliefs. There is no evolutionary advantage to having accurate beliefs about quantum mechanics, consciousness, or cosmic origins. Natural selection has no mechanism to select for metaphysical accuracy.

This creates what philosopher Thomas Nagel calls an “intolerable conflict” in naturalism—it relies on rational faculties that, by its own account, evolved for survival rather than metaphysical accuracy. The naturalist faces what Barry Stroud terms “irrecoverable circularity”: they must presuppose the reliability of faculties whose reliability they then try to explain through evolutionary processes.

Even sophisticated attempts to escape this circularity through epistemic externalism merely shift the problem. Reliabilism claims that beliefs formed through reliable processes are justified regardless of whether we can prove their reliability. But this only relocates the difficulty: how do we establish which processes are reliable without presupposing their reliability? At some point, non-empirical axioms must be accepted on non-natural grounds.

If naturalists retreat to pragmatism, accepting these axioms as “useful fictions” rather than truths, they have conceded that naturalism cannot justify its foundations within its own framework. This pragmatism is itself a non-empirical philosophical commitment that naturalism can neither justify nor dispense with.

Premise 2: Arbitrary Restriction of Inquiry

Naturalism’s second critical flaw lies in its arbitrary restriction of legitimate inquiry to natural causes alone.

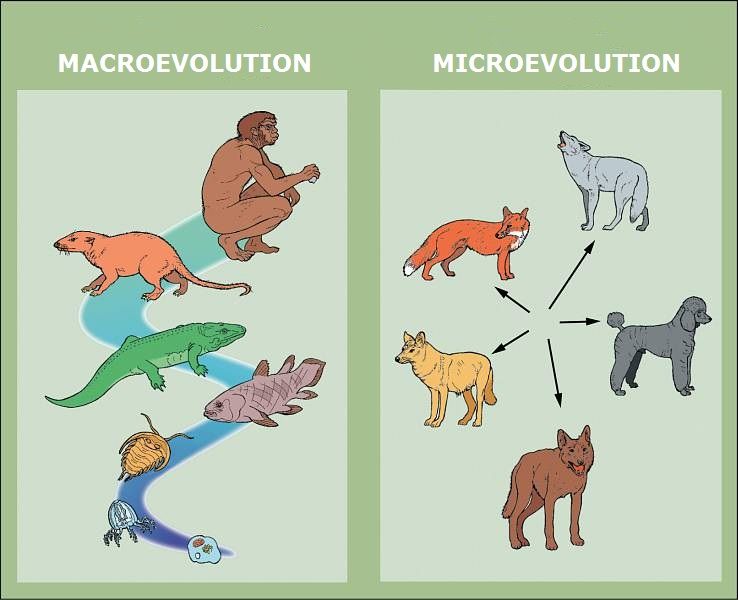

Philosophical naturalism makes an unwarranted leap from methodological naturalism (the practical scientific approach of seeking natural causes) to a metaphysical claim that only natural causes exist. This represents a category error—moving from a useful methodological heuristic to an ontological assertion without sufficient justification.

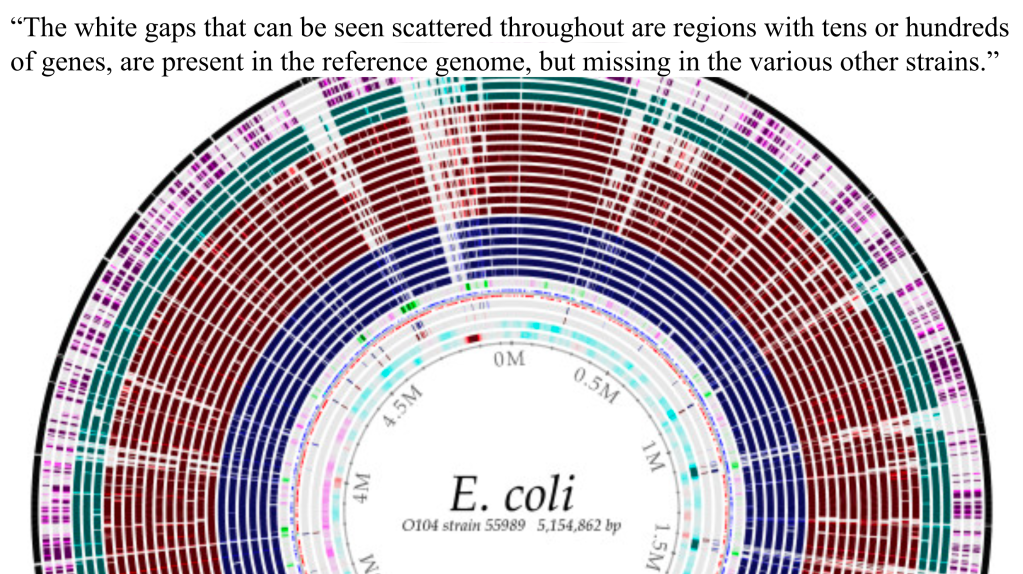

By defining reality exclusively in terms of what natural science can study, naturalism creates a self-fulfilling prophecy: it finds only natural causes because it classifies every discoverable cause as natural from the outset. This circular approach prejudices investigation rather than allowing evidence to determine the boundaries of reality.

The most powerful demonstration of this limitation is consciousness. Despite tremendous advances in neuroscience, the qualitative character of subjective experience—what philosopher Thomas Nagel calls the “what it is like” aspect of consciousness—resists reduction to physical processes. Neuroscience can correlate neural activity with reported experiences but cannot explain why these physical processes are accompanied by subjective experience at all.

This limitation isn’t temporary but structural—scientific methods are designed to study third-person observable phenomena, not first-person subjectivity. The scientific method, by its very nature, abstracts away subjective qualities to focus on quantifiable properties. This creates what philosopher David Chalmers calls the “hard problem” of consciousness—explaining how and why physical processes give rise to subjective experience.

Naturalists often respond by incorporating consciousness as a “fundamental” feature of an expanded natural framework—what Chalmers calls “naturalistic dualism.” But this semantic maneuver doesn’t resolve the ontological problem. If consciousness is fundamental and irreducible to physical processes, then reality includes non-physical properties—precisely what traditional naturalism denies. This exhibits what philosopher William Hasker calls “naturalism of the gaps”—expanding the definition of “natural” to encompass whatever resists reduction.

Historical examples such as electromagnetism, or the life processes once attributed to a vital force, were unexplained physical phenomena that were eventually incorporated into expanded physical frameworks. Consciousness presents a categorically different challenge: explaining how subjective experience arises from objective processes. This isn’t merely an unexplained mechanism but a conceptual chasm between fundamentally different categories of reality.

Premise 3: Explanatory Gaps

Naturalism’s third major flaw lies in its persistent failure to explain fundamental aspects of human experience, despite centuries of scientific progress.

Beyond consciousness, naturalism struggles to account for several phenomena central to human existence:

Intentionality: The “aboutness” of mental states—the fact that thoughts, beliefs, and desires are about something beyond themselves—resists physical reduction. Physical states aren’t intrinsically “about” anything; they simply are. Yet our mental states exhibit this irreducible directedness toward objects, concepts, and possibilities. Philosopher Franz Brentano identified intentionality as the defining characteristic of mental phenomena, creating an explanatory gap that naturalism has failed to bridge.

Rationality: Logical relationships between propositions aren’t physical connections but normative ones—they describe how we ought to reason, not merely how matter behaves. The laws of logic and mathematics exhibit a necessity that natural laws lack. Natural laws describe contingent regularities that could theoretically be otherwise; logical laws express necessary truths that couldn’t possibly be different. This modal difference creates another category distinction that naturalism struggles to accommodate.

Morality: Moral imperatives involve inherent “ought” claims that cannot be derived from purely descriptive “is” statements. As philosopher G.E. Moore identified, any attempt to define moral properties in natural terms commits the “naturalistic fallacy.” Evolutionary accounts may explain the origins of moral psychology but cannot justify moral claims as true or authoritative. If moral judgments are merely evolutionary adaptations, their normative force is undermined, creating what philosopher Sharon Street calls the “Darwinian Dilemma.”

Naturalists often respond to these gaps through eliminativism or emergentism. Eliminativism denies the reality of these phenomena, claiming they are illusions or folk-psychological confusions. But this approach is self-defeating—an illusion of consciousness must be experienced by someone, making consciousness inescapable. As philosopher John Searle notes, “Where consciousness is concerned, the appearance is the reality.”

Emergentism fares no better. To claim that consciousness “emerges” from physical processes without explaining the mechanism of emergence merely restates the mystery. Unlike other emergent properties (like liquidity emerging from H₂O molecules), consciousness involves a transition from objective processes to subjective experience: a categorical leap, not a continuous spectrum. The naturalist must explain how an arrangement of non-conscious particles yields consciousness. The challenge is so intractable that philosopher Colin McGinn argues the human mind may suffer “cognitive closure” with respect to it.

These explanatory gaps aren’t temporary limitations in scientific knowledge but principled barriers arising from naturalism’s restricted ontology. After centuries of scientific progress, these gaps remain as profound as ever, suggesting a fundamental inadequacy in naturalism’s conceptual resources.

Premise 4: Inconsistent Verification

Naturalism’s fourth fatal flaw lies in its criterion for knowledge, which cannot justify itself without inconsistency.

The naturalist privileges empirical verification—the idea that meaningful claims must be empirically testable. Yet this verification principle itself cannot be empirically verified. It is a philosophical position, not a scientific discovery. This creates an internal contradiction: if we accept only what can be demonstrated through scientific methods, we must reject the very principle that demands such verification.

Even if naturalists reject strict verificationism, they still privilege empirical evidence above all else. Yet this privileging itself cannot be empirically justified. It’s a meta-empirical value judgment about what counts as legitimate evidence—precisely the kind of non-empirical philosophical commitment that naturalism struggles to account for.

Attempts to resolve this inconsistency through naturalized epistemology (following Quine) don’t solve the problem; they institutionalize it. Treating epistemology as a branch of psychology assumes the reliability of the psychological methods used to study epistemology. This raises what philosopher Laurence BonJour calls the problem of “meta-justification”: how do we justify our justificatory framework? Naturalized epistemology ultimately relies on pragmatic success, but this pragmatism itself requires non-empirical criteria for what constitutes “success.”

Even if we accept Quine’s web of belief, some strands in the web must be anchored independently of empirical verification. These include logical principles, mathematical truths, and the assumption that reality is comprehensible. These principles aren’t empirically derived but are preconditions for empirical inquiry. Their necessity reveals naturalism’s dependence on non-natural foundations.

Naturalism thus faces an inescapable dilemma: either it consistently applies its verification standards and undermines its own foundations, or it makes special exceptions for its core principles and thereby acknowledges limits to its explanatory scope.

The Inescapable Dilemma of Naturalism

These four premises reveal that naturalism faces an inescapable dilemma:

- Strict naturalism maintains a coherent ontology (only physical entities exist) but fails to account for consciousness, intentionality, rationality, and its own foundations.

- Expanded naturalism accommodates these phenomena but sacrifices coherence by stretching “natural” to include fundamentally non-physical properties.

This isn’t merely a limitation of current knowledge but a structural impossibility within naturalism’s framework. The problem isn’t that naturalism hasn’t yet explained consciousness; it’s that consciousness is categorically different from physical processes, requiring explanatory principles that transcend physical causation.

A “richer naturalism” that embraces consciousness as fundamental, accepts non-empirical axioms pragmatically, and incorporates abstract objects has abandoned naturalism’s core thesis that reality consists entirely of natural entities subject to natural laws. This isn’t an evolution of inquiry but a conceptual surrender.

Beyond Naturalism: The Case for Metaphysical Pluralism

The most coherent alternative to naturalism is metaphysical pluralism—recognizing that reality includes physical processes, conscious experience, abstract entities, and normative truths, without reducing any to the others.

This pluralistic approach acknowledges that different domains of reality require appropriate methods of investigation:

- Physical phenomena are best studied through empirical scientific methods

- Conscious experience requires phenomenological approaches that honor subjectivity

- Logical and mathematical truths demand rational analysis independent of empirical verification

- Normative questions involve philosophical reflection on values, not merely empirical facts

Unlike naturalism, pluralism doesn’t face self-defeat (it can ground rational faculties non-circularly), doesn’t arbitrarily restrict inquiry (it allows appropriate methods for different domains), and doesn’t face explanatory gaps (it acknowledges irreducible categories without eliminating them).

Naturalists often appeal to Ockham’s Razor (parsimony) and the practical success of science (pragmatism) as reasons to prefer naturalism over more metaphysically rich views like pluralism. However, as the preceding premises argue both implicitly and explicitly, these critiques are problematic when leveled by naturalists themselves, given the internal difficulties naturalism faces.

1. Problems with the Parsimony Critique:

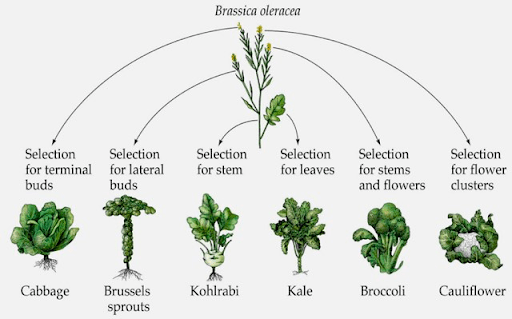

- False Parsimony: Naturalism’s claim to parsimony often amounts to ontological stinginess achieved through explanatory inadequacy. It claims to be simpler by positing only one fundamental kind of “stuff” (natural/physical). However, as detailed above, this simplicity is bought at the cost of being unable to adequately account for or integrate crucial aspects of reality like consciousness, intentionality, rationality, and normativity (Premises 2 & 3). A theory that is simple but leaves vast swathes of reality unexplained is not genuinely more parsimonious than a theory that posits more fundamental categories but can actually explain or accommodate all the relevant phenomena. True parsimony should be measured not just by the number of types of entities posited, but by the overall complexity of the explanatory framework required to account for the data. Pluralism, by assigning different phenomena to different appropriate categories, might require a more diverse ontology but arguably a less strained and more comprehensive explanatory structure than naturalism, which must resort to eliminativism, mysterious emergence, or redefining terms to handle outliers.

- Redefining “Natural” Undermines Parsimony: As Premise 2 notes, naturalists trying to accommodate phenomena like consciousness might call it a “fundamental feature” within an “expanded naturalism” or “naturalistic dualism.” This is an attempt to absorb irreducible phenomena by broadening the definition of “natural.” But this move itself adds fundamental categories or properties to the naturalist ontology. If “natural” now includes irreducible subjective experience or fundamental abstract objects, the initial claim to radical simplicity (“only physical stuff”) is surrendered. This “naturalism of the gaps” (to use Hasker’s term) demonstrates that naturalists, when pressed, do feel the need to add fundamental categories, thereby undermining their own parsimony argument against pluralism.

- Parsimony Itself is a Non-Empirical Principle: Ockham’s Razor is a meta-scientific or philosophical principle guiding theory choice. It’s not something discovered through empirical science. As Premise 4 argues, naturalism struggles to justify such non-empirical principles within its own framework. If the naturalist insists that all legitimate knowledge must be empirically verifiable or grounded, they face a difficulty in appealing to a principle like parsimony, which is a criterion of theoretical virtue, not an empirical fact. Using parsimony to critique pluralism requires the naturalist to step outside their own purported empirical-only standard, or at least rely on a principle they cannot ground naturally.

2. Problems with the Pragmatism Critique:

- Conflation of Methodological and Metaphysical Pragmatism: Naturalists often point to the undeniable success of science (which operates using methodological naturalism, seeking natural explanations within its domain) as evidence for metaphysical naturalism (the philosophical claim that only natural things exist). As Premise 2 argues, this is a category error. Methodological naturalism is pragmatic for the specific goal of studying the physical world empirically. Metaphysical naturalism is a comprehensive worldview claim. The pragmatism of the former doesn’t automatically transfer to the latter. Pluralism fully embraces methodological naturalism for understanding the physical realm but recognizes that other realms (subjective experience, logic, morality) require different, though equally valid, approaches.

- Pragmatism for What Purpose? If pragmatism means “what works as a comprehensive worldview,” then naturalism is arguably not pragmatic, because it fails to provide a coherent or satisfactory account of fundamental aspects of human reality (consciousness, meaning, values, reason’s validity), as detailed in Premise 3. It might be pragmatic for building bridges or predicting planetary motion, but it appears deeply unpragmatic for understanding what it means to be a conscious, rational, moral agent in a world with objective truths. Pluralism, by acknowledging different domains and methods, may well be more pragmatically successful as a philosophical framework, because it provides conceptual resources to engage meaningfully with the full spectrum of human experience and inquiry, not just the physically quantifiable parts.

- Naturalism May Rely on Pragmatism for its Own Foundations: Premises 1 and 4 suggest that naturalists, when pushed on how they justify the reliability of reason or the empirical method itself, might retreat to a pragmatic defense (“these methods just work”). If naturalism must ultimately appeal to pragmatism to ground its own core principles, it’s in a weak position to then turn around and critique pluralism solely on pragmatic grounds, especially when pluralism can argue it is more pragmatically successful in making sense of all of reality. This creates a kind of “pragmatism of the gaps” where pragmatism is invoked precisely where naturalism’s internal justification fails.

In summary, the naturalist critiques of pluralism based on parsimony and pragmatism often miss the mark. Naturalism’s parsimony is frequently achieved by ignoring significant data or by subtly expanding its ontology, undermining the claim to unique simplicity. Its appeal to pragmatism often confuses the success of scientific method (which pluralism utilizes) with the philosophical adequacy of metaphysical naturalism as a total worldview, and ignores naturalism’s own potential reliance on pragmatic grounds it cannot fully justify. Pluralism, while positing a richer ontology, can argue it offers a more genuinely explanatory parsimony and a more comprehensive pragmatism by acknowledging the irreducible complexity of reality.

Metaphysical pluralism doesn’t necessarily entail supernaturalism or theism. One can reject both naturalism and supernaturalism by acknowledging that reality may include non-physical aspects (consciousness, mathematical truths, values) without positing supernatural entities. Philosophers like Thomas Nagel, John Searle, and David Chalmers have developed non-materialist frameworks that don’t entail theism.

Conclusion

Naturalism fails as a comprehensive worldview. Its success in explaining physical phenomena doesn’t justify its extension to all aspects of reality. Its persistent explanatory gaps in consciousness, rationality, and value—coupled with its inability to justify its own foundations—reveal its fundamental inadequacy.

A truly rational approach follows evidence where it leads, even when it points beyond the boundaries of naturalistic explanation. This isn’t an abandonment of rationality but its fulfillment—acknowledging that different aspects of reality may require different modes of understanding.

Metaphysical pluralism offers a more coherent framework that honors the multidimensional character of reality. It maintains the empirical rigor of science within its proper domain while recognizing that human experience encompasses dimensions that transcend physical reduction. In doing so, it avoids both the reductionism of strict naturalism and the supernaturalism it rightly criticizes, providing a middle path that better accounts for the full spectrum of human knowledge and experience.